How MemGQL Lets You Query Distributed Data Without ETL

Your data probably doesn’t live in one place. Some of it is in Postgres. Some in ClickHouse. Some in Iceberg. Some in Memgraph. Moving all of that into one system sounds simple in theory, but in practice, it can be expensive, slow, and hard to justify.

That is the idea behind Memgraph Zero, Memgraph’s new product line built around a simple principle: your data should stay where it is, and you should still be able to query it as a graph. The first component in that product line is MemGQL.

In our latest Community Call, Introducing MemGQL: One Query Language Across All Your Data Sources, Memgraph CTO and co-founder Marko Budiselic introduced MemGQL, explained how it fits into Memgraph Zero, and walked through live demos across multiple data sources.

If you missed the live session, you can watch the full MemGQL Community Call recording. The session goes deeper into the architecture, demos, current limitations, and audience Q&A.

Below are the key takeaways.

Key Takeaway 1: Why Build Memgraph Zero

The session started with the problem Memgraph Zero is built to address: copying data is often the wrong default.

Large organizations rarely have one clean database. Data sits across operational systems, analytical stores, graph databases, file formats, and APIs. Some of it is hot. A lot of it is cold. Moving all of that data into one place introduces infrastructure cost, engineering work, and ongoing maintenance.

If ETL can be avoided, it should be. That does not mean centralization is useless. Teams still need a centralized way to ask questions across silos. The issue is that physically centralizing the data is not always the best path to centralized access.

This becomes even more important with AI agents. Agents need to fetch data, but they also need to share what they discover. If one agent spends tokens finding the right data or building useful context, another agent should not have to repeat the same work. Memgraph Zero is partly about making that feedback reusable.

GQL also makes the timing important. As graph workloads move across databases, applications, and agentic systems, compatibility with a standard graph query language becomes less of a nice-to-have and more of an architectural requirement.

Key Takeaway 2: What Memgraph Zero Is and Where MemGQL Fits

Memgraph Zero is the umbrella product line. MemGQL is the first component introduced under it.

The name matters. It is called Memgraph Zero because the core idea is zero ETL. Keep data where it is. Query it as a graph when that is the right model.

Why graphs? Because graphs are a natural way to model complex data. As soon as you need to connect multiple sources, you usually end up drawing entities and relationships anyway. A graph gives that whiteboard model a queryable structure.

That is especially useful when the data comes from more than one place. Instead of forcing every backend into one storage system, Memgraph Zero lets you model those backends as a graph and expose them through one endpoint.

Marko also tied this to the way agents work today. Most agents are still built for “single-player mode.” They operate alone, touch tools independently, and rebuild context as they go.

Memgraph Zero is designed for a more “multi-player” mode, where agents can use a shared data layer and reuse findings across workflows.

GQL is the interface that makes this direction practical. It is a long-term graph query standard, and it is close enough to Cypher to fit naturally with Memgraph’s current graph model.

MemGQL is one part of that broader initiative. Other parts mentioned in the session include the Mapping Agent, MCP, Kubernetes-related work, and future product capabilities.

Key Takeaway 3: How MemGQL Is Implemented

Under the hood, MemGQL is a federated GQL engine implemented in Rust. It uses the Bolt protocol in front because Bolt already fits the graph ecosystem. That means existing tools can connect without forcing developers into a new workflow.

<Embed this YouTube Short here>

Examples include:

mgconsole- Memgraph Lab

- Bolt-compatible application drivers

- Programming language clients already used in graph workflows

The idea is practical. If developers already know how to connect to graph systems through Bolt, MemGQL should feel familiar.

Another important part is agentic mapping. Instead of making users manually define every mapping from SQL tables to graph nodes and edges, MemGQL can use an agent-assisted workflow to accelerate that process.

Much of relational to graph modeling is deterministic. Tables often map to nodes. Join tables often map to relationships. Foreign keys give strong hints about edges. There will still be edge cases. But agentic mapping can get users to a useful first model faster than starting from a blank file.

Memgraph Zero is also being designed around off-the-shelf connectors. The current and near-term focus is SQL and NoSQL because those systems already have storage and execution. The broader direction includes structured, semi-structured, unstructured, REST, GraphQL, and API-based data sources.

Not all of that is available today. Semi-structured and unstructured support is a goal, while current support is stronger around graph and SQL-oriented backends.

Key Takeaway 4: How the Architecture Works in Practice

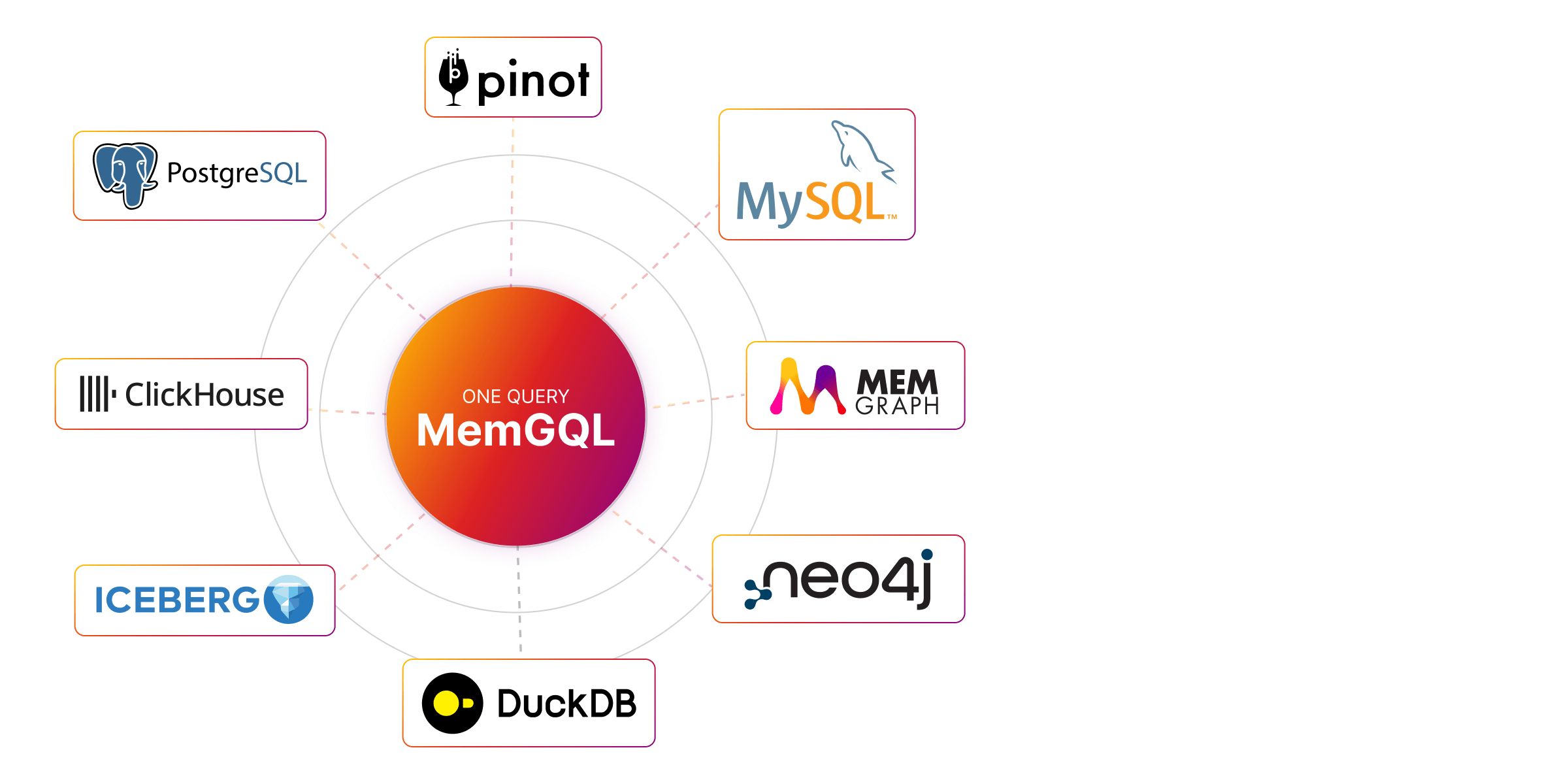

The architecture is centered on a virtual graph. MemGQL sits between the query client and the source systems. On one side, you have Bolt-compatible clients and agents using MCP. On the other side, you have systems such as Memgraph, Postgres, ClickHouse, Iceberg, DuckDB, MySQL, Apache Pinot, and Neo4j.

The important detail: data is not copied by default.

MemGQL translates and plans the query, then pushes execution down to the target system where possible. For graph backends, that means translating GQL into Cypher. For relational backends, that means translating GQL into SQL based on a graph mapping.

In simple terms:

- A GQL query comes in.

- MemGQL uses the configured graph and mapping information.

- It translates the query into the backend-native language.

- The target system executes the query.

- MemGQL returns the result to the client or agent.

For Memgraph as a backend, the mapping is almost direct because Memgraph already uses a property graph model. For SQL systems, the mapping layer is required because tables and relationships need to become graph nodes and edges.

Marko also mentioned caching as a future direction. Memgraph is a natural fit there because it is an in-memory graph database and can act as an execution or caching layer when data needs to be pulled closer for graph workloads.

Key Takeaway 5: What Works Now and What Comes Next

The current feature set includes GQL to Cypher translation, with Memgraph and Neo4j supported as graph backends. GQL to SQL is also available, although Marko made it clear that SQL coverage is still earlier than Cypher coverage.

The connectors mentioned in the session included:

- Memgraph

- Neo4j

- Postgres

- DuckDB

- Iceberg

- ClickHouse

- MySQL

- Apache Pinot

The near-term roadmap focuses less on adding every connector immediately and more on improving the depth of the query layer.

That means:

- More GQL features

- Better Cypher pushdown

- Better SQL pushdown

- Deeper integration with Memgraph

- Distributed query execution

- More off-the-shelf connectors

- SaaS for teams that do not want to manage the infrastructure themselves

The connector roadmap follows the same logic. SQL and NoSQL come first because those systems already have storage and query execution. File-based, API-based, and search-oriented sources need more work because they do not all behave like databases.

Key Takeaway 6: Where Memgraph Zero Fits in Real Use Cases

Marko walked through several use cases for Memgraph Zero and MemGQL.

The most direct one is federated GQL across heterogeneous backends. You can run graph queries across systems without first moving all the data into one graph database.

Another use case is public-private data management. For example, a team may want to combine a public dataset with internal enterprise data without copying both into a single store.

Distributed compute is another angle. Since MemGQL can rely on the storage and execution capabilities of target systems, it can use the strengths of those systems instead of trying to replace them.

Then there is enterprise context sharing. This is especially relevant for AI systems. A team might want to combine vector search from one system, graph traversal from Memgraph, and structured records from a relational backend. The goal is not just to retrieve data. It is to assemble the right context.

The last but not certainly not the leasr is agentic access. MemGQL gives agents a single graph interface across multiple systems through MCP. That reduces the need for every agent to discover the same data from scratch.

Key Takeaway 7: MemGQL and Memgraph Are Complementary

If you need real-time graph data access, deep path traversals, graph analytics, or complex algorithms, Memgraph is the right engine.

If you need federated GQL across existing data sources, Memgraph Zero and MemGQL are the right layer.

They solve different parts of the problem. MemGQL needs Memgraph for:

- Real-time graph data access

- Graph analytics

- Deep path traversals

- Complex algorithms

- Graph caching

Memgraph needs MemGQL because no single database can handle every data tradeoff. Teams use different systems for good reasons. MemGQL gives Memgraph a way to integrate with those systems without making copying the default answer.

This is why the two layers work better together. MemGQL can expose data from different systems through one graph interface, while Memgraph can handle the workloads that need fast graph execution, analytics, traversal, or caching.

That gives teams more flexibility. They can leave data in the systems where it already belongs, then use Memgraph where graph-native execution actually matters.

Key Takeaway 8: The Demos Showed MemGQL Across Three Workflows

The session included three demos, and each one mapped to a different part of the product story.

Demo 1: GQL to Cypher

The first demo showed MemGQL running in GQL to Cypher mode against Memgraph.

Marko started Memgraph, started MemGQL, and connected through mgconsole. He created a small graph using a GQL insert statement, which MemGQL translated into Cypher before executing it in Memgraph.

He also showed GQL syntax that needed to be translated into Cypher-compatible form. One example was a WHERE clause inside a pattern expression. Another was a one to three hop path pattern translated into Cypher’s variable length path syntax.

The takeaway: when the backend is already a property graph, MemGQL mainly handles query translation.

Demo 2: Agentic Mapping

The second demo used MySQL and the Sakila dataset.

Structured2Graph connected to MySQL, read the schema and metadata, and generated a graph mapping. Marko used deterministic mode, which keeps literal table and schema names. The mapping produced 12 nodes and 18 edges.

Then MemGQL used that mapping to translate a GQL query over an actor node into SQL against the actor table.

The takeaway: relational data can stay in MySQL while being queried through a graph model.

Demo 3: Multi and Composite Graph Queries

The third demo used multiple active connections with Memgraph and Postgres.

Marko registered two abstract graphs:

devsfor Memgraphsocialfor Postgres

He inserted data into both systems and showed a query that combined results across them.

The takeaway: MemGQL can act as more than a single-backend translator. It can query across multiple graph-shaped sources.

Wrapping Up

Memgraph Zero starts from the practical reality that data is distributed, while the questions you need to ask are connected.

MemGQL is the first step in that product line. It gives you a federated GQL layer for querying existing systems as a graph, without making migration or ETL the first answer.

It is still early in some areas, especially authentication, SQL coverage, and advanced pushdown. But the direction is clear: query data where it lives, model relationships as a graph, and give both developers and agents one structured interface to work with.

Watch the full Introducing MemGQL Community Call recording to see Marko’s architecture walkthrough, demos, roadmap discussion, and complete Q&A.

Q&A

Here’s a compiled list of the questions and answers from the Community Call Q&A session.

Note that these are paraphrased slightly for brevity. For complete details, watch the full Community Call recording.

- Does MemGQL Make Neo4j Queries Faster?

- No, if MemGQL pushes a query down to Neo4j, the performance is still Neo4j’s performance. There is some overhead because data passes through the GQL server before reaching the client, but Marko expects that overhead to usually be small. MemGQL can only change performance characteristics if data is cached or copied. Since avoiding unnecessary copying is the main design goal, caching is something to use only when needed.

- Can MemGQL Handle Enterprise Authentication Today?

- Not yet. Marko said authentication and authorization are not currently supported in MemGQL. Enterprise security needs to be added at the MemGQL layer and then passed through to target systems based on what those systems support. That is a major production consideration.

- Will MemGQL Support Direct SQL Execution?

- Not in the near term. The focus is on GQL first. Marko explained that GQL and SQL are not subsets of each other. They express different things, and some tasks are easier in one language than the other. For MemGQL, the first interface is graph-based because graph modeling fits diverse data sources better than forcing everything into one SQL schema.

- Can You Link Edges Across Graphs in Different Databases?

- In theory, yes. In practice, the mapping layer needs a way to reference entities across systems. Marko said this case was not yet implemented or tested in the demo flow, so it should be treated as future-facing rather than a proven current feature.

- What NoSQL Connectors Are Planned?

- MongoDB is planned and will likely be the first document database connector. Redis was also mentioned as a likely near-term connector. Marko noted that MongoDB has many features, so specific user requirements will matter. The immediate focus is still on improving GQL coverage, Cypher pushdown, and SQL pushdown quality before expanding too far into more backends.

- Are Oracle and SQL Server Connectors Planned?

- Yes, Oracle and SQL Server connectors are planned, but stronger SQL support needs to come first. Marko explained that adding the connector itself should be relatively simple once broader SQL translation coverage is in place. The hard part is not only connecting to the database. It is making sure the GQL to SQL translation layer supports enough of the SQL behavior users actually need.

- Will MemGQL Support CSV and Parquet?

- Yes, but this is harder than supporting SQL or NoSQL systems. CSV and Parquet files do not have query execution attached to them. That means Memgraph Zero needs an execution or caching layer to make them usable in this model. Marko mentioned Parquet as a good future fit because Memgraph can pull data from Parquet and run graph analytics. For very large datasets, such as large Iceberg datasets, this still needs careful management.

- Are Elasticsearch and OpenSearch Planned?

- Likely, yes. Marko mentioned Elasticsearch and OpenSearch as longer-term connector areas because many teams rely on them. MongoDB and Redis are likely earlier priorities, but search systems are difficult to avoid in the long run.

- How Do You Run Native Graph Analytics on External Data?

- Today, you still need to move or cache the relevant data in Memgraph before running native graph analytics. For large external datasets, the practical question becomes how much data to pull, when to cache it, and where to run the graph workload.